Generate Alt Text with OpenAI Vision shortcut

Use Apple Shortcuts to automatically generate an image description using OpenAI’s API.

This shortcut helps you to generate quality image descriptions to use as alt text for images you upload to the internet. Alt text helps people using screen readers to understand the context of an image, even if they can’t see it.

You can pass image files or image URLs (for .png, .jpg, .jpeg, .webp, or .gif images) as input to this shortcut. It sends them to OpenAI’s Vision API along with a specific prompt, and generates a unique description for each image, including any text found in it.
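For readers curious what such a request might look like outside of Shortcuts, here is a minimal Python sketch of the JSON body for OpenAI's Chat Completions endpoint. It is an illustration, not the shortcut's actual internals: the helper names `image_part` and `build_request`, the example URL, and the choice to send local files as base64 data URLs with a JPEG MIME type are all assumptions made for this example.

```python
import base64
import json

API_URL = "https://api.openai.com/v1/chat/completions"
PROMPT = "Please create alt text for this image."


def image_part(source, mime="image/jpeg"):
    """Return the image content part for a URL string or raw image bytes."""
    if isinstance(source, bytes):
        # Assumption: local files are sent inline as a base64 data URL.
        encoded = base64.b64encode(source).decode("ascii")
        url = f"data:{mime};base64,{encoded}"
    else:
        # Otherwise treat the input as a publicly reachable image URL.
        url = source
    return {"type": "image_url", "image_url": {"url": url}}


def build_request(source, model="gpt-4o"):
    """Build the JSON body for a single image description request."""
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [{"type": "text", "text": PROMPT}, image_part(source)],
            }
        ],
    }


# Hypothetical image URL, used only to show the resulting structure.
body = build_request("https://example.com/photo.png")
print(json.dumps(body, indent=2))
```

The body would be sent as a POST to `API_URL` with an `Authorization: Bearer <your API key>` header. Handling several images amounts to building one such request per image.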

This image description is intended as a starting point for your alt text. You should review it, and edit or add to it as needed.

The shortcut can work particularly well if you use it as a function within another shortcut to format the image’s description into the HTML or Markdown text for the uploaded image.
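As a rough illustration of that formatting step, the Python sketch below wraps a description in HTML or Markdown image syntax. The function names `to_html` and `to_markdown` are hypothetical, and the escaping rules are one reasonable choice rather than what any particular shortcut does.

```python
import html


def to_html(src, alt):
    """Return an <img> tag with the description safely escaped for attributes."""
    return f'<img src="{html.escape(src, quote=True)}" alt="{html.escape(alt, quote=True)}">'


def to_markdown(src, alt):
    """Return Markdown image syntax with the description as alt text."""
    # Escape backslashes and square brackets so they cannot end the alt text early.
    safe = alt.replace("\\", "\\\\").replace("[", "\\[").replace("]", "\\]")
    # Collapse line breaks and repeated spaces so the image stays on one line.
    safe = " ".join(safe.split())
    return f"![{safe}]({src})"


description = 'Two men stand in front of a red wall with "LETTERKENNY" written on it.'
print(to_html("image-1.png", description))
print(to_markdown("image-1.png", description))
```

Escaping matters here because generated descriptions often contain quotation marks, which would otherwise break an HTML `alt` attribute.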

Please Note: This shortcut uses OpenAI’s GPT-4o model, which is accessed with an API key from accounts set up as Pay-As-You-Go customers who have made a successful payment of $1 or more, or customers who subscribe to ChatGPT Plus. Learn more: https://platform.openai.com/docs/guides/vision

You may have to buy at least $1 worth of credit and then wait about 30 minutes for API access to kick in. In my experience, each image description costs about $0.01 on the pay-as-you-go plan.

I’ve written a bit about this shortcut and its methodology here.

Current Prompt

Here’s the prompt that I use to direct Vision into creating image descriptions:

Please create alt text for this image.

If you have any suggestions for improvements, please let me know.

See It in Action

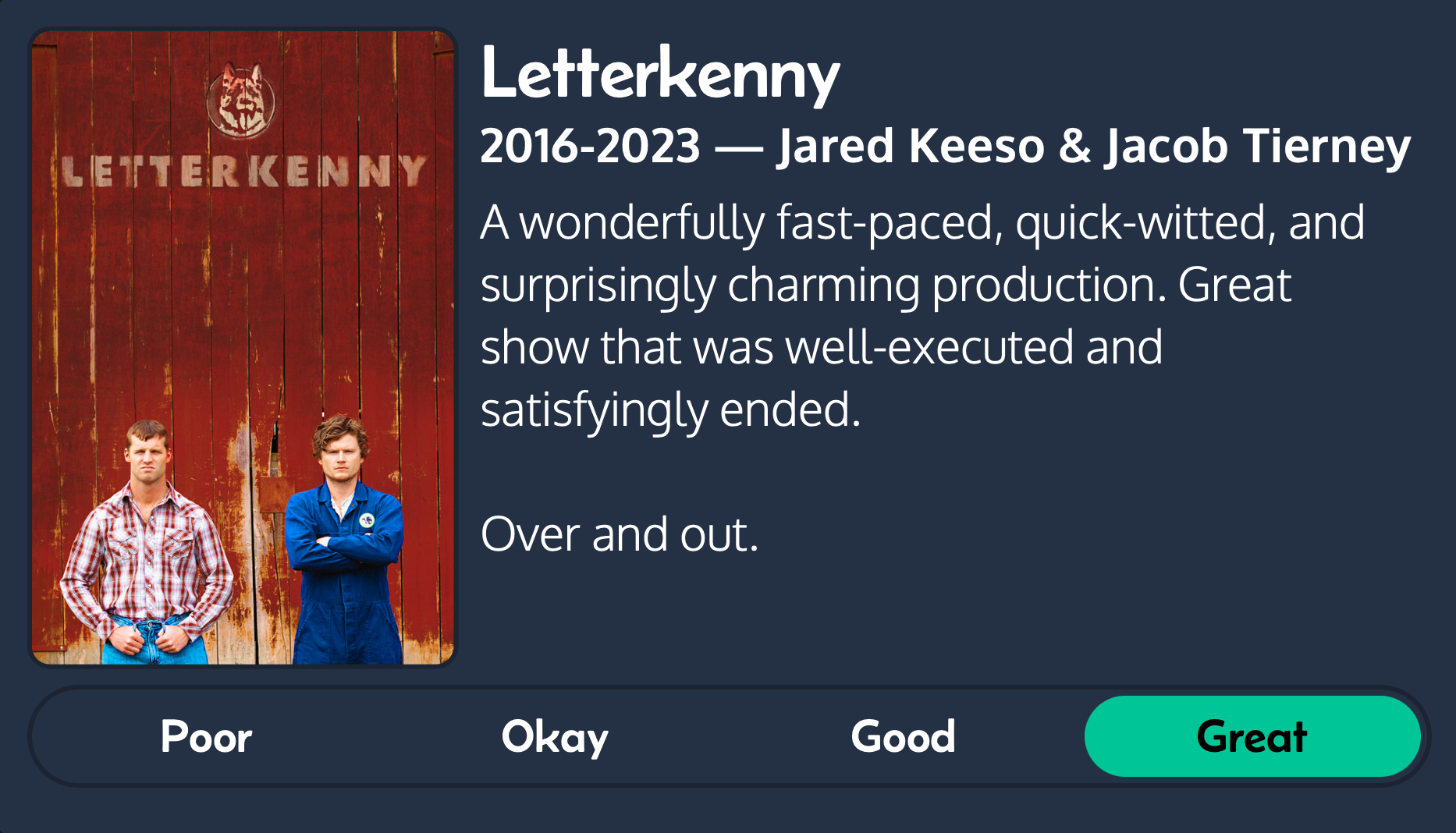

For example, it took this image:

and generated this description:

Two men stand arms crossed in front of a red wall with “LETTERKENNY” written on it. Text: “Letterkenny 2016-2023 — Jared Keeso & Jacob Tierney A wonderfully fast-paced, quick-witted, and surprisingly charming production. Great show that was well-executed and satisfyingly ended. Over and out.” Buttons below read “Poor,” “Okay,” and “Good,” with “Great” selected.

Requirements

- An OpenAI API key with access to the GPT-4 with vision model or later (like gpt-4o)

Run This Shortcut

You can run this shortcut from its URL (such as from within a note, calendar event, or to-do item):

shortcuts://run-shortcut?name=Generate%20Alt%20Text%20with%20OpenAI%20Vision
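If you rename the shortcut, the name in that URL has to be percent-encoded again. A small Python sketch of building the link, where the helper name `run_shortcut_url` is made up for this example:

```python
from urllib.parse import quote


def run_shortcut_url(name):
    """Build a shortcuts:// URL that runs the shortcut with the given name."""
    # safe="" percent-encodes every reserved character, including "/" and "&".
    return "shortcuts://run-shortcut?name=" + quote(name, safe="")


print(run_shortcut_url("Generate Alt Text with OpenAI Vision"))
```

This prints the same URL shown above.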

Credits

Thanks to TeamData on YouTube for step-by-step instructions on how to successfully organize the data to pass through this shortcut.

Latest Release Notes

Version 1.3 - 2025-02-16

- Added support for getting descriptions for multiple images at once

- Simplified the prompt that directs the LLM to create alt text for the image

- Download version 1.3

Version History

Version 1.2 - 2024-07-24

- Updated to use the gpt-4o model (the gpt-4-vision-preview model is being deprecated by OpenAI)

- Substituted a simple ‘Copy to Clipboard’ as the final action instead of ‘Stop and Output’ because it was creating new text files in the file system for users running it as a Quick Action

- Updated the instructional prompt to try to cut down on unnecessarily wordy transcriptions

- Download version 1.2

Version 1.1 - 2024-01-12

- Added an option to show the generated description at the end — a “test mode”, if you will.

- Added a step to convert .heic images to .jpeg before uploading them since the Vision API doesn’t support those. (Thanks @superdavey@social.lol)

- Download version 1.1

Version 1.0 - 2024-01-08

- Introducing ‘Generate Alt Text with OpenAI Vision’

- Download version 1.0

Thanks for checking out this shortcut! It’s part of the HeyDingus Shortcuts Library. If you’re sharing my shortcuts or modifying them (or see a bug or have a feature request), I’d love to hear from you — please give me a shout! And maybe consider a donation if you find this shortcut fun or useful. Thank you. ✌️