Quick Tip: Get Visual Narration Anywhere There’s Text

It’s no secret that I’ve become enamored with having web articles read aloud to me. I’ve been testing the narration features of Reader, Instapaper, and Omnivore. (Spoiler: Reader’s is good, Instapaper’s is okay, and Omnivore’s is next-level.) But what about outside of those apps?

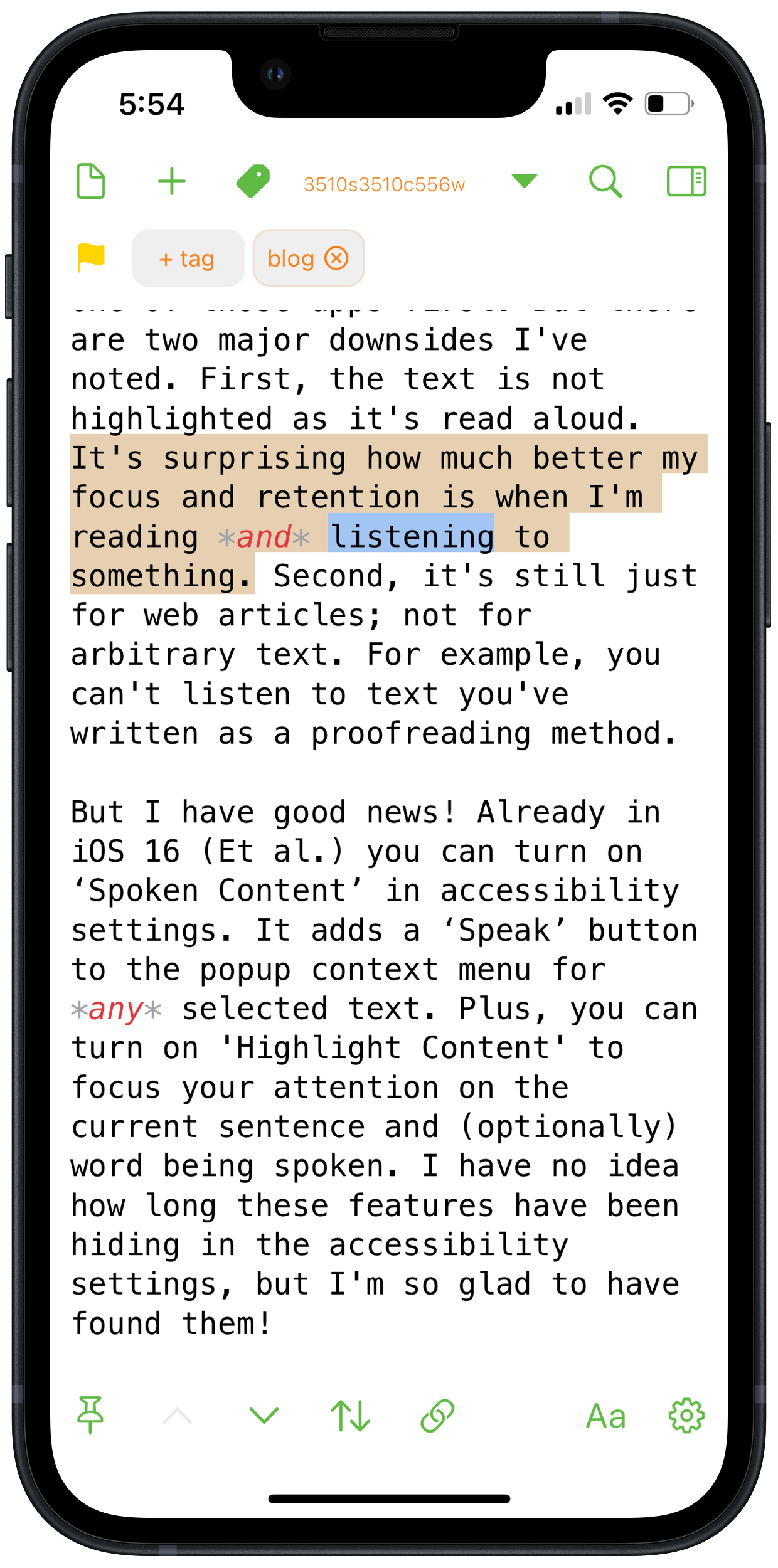

If you’ve been following along with my Beta Impressions thread, you’ll know that we’ll soon be able to enjoy good-quality narration by Siri in Safari Reader. That will make it much easier to have text read out from any webpage without sending it to one of those apps first. But I’ve noted two major downsides. First, the text is not highlighted as it’s read aloud. It’s surprising how much better my focus and retention are when I’m reading and listening at the same time. Second, it’s still just for web articles, not for arbitrary text. For example, you can’t listen to text you’ve written as a proofreading method.

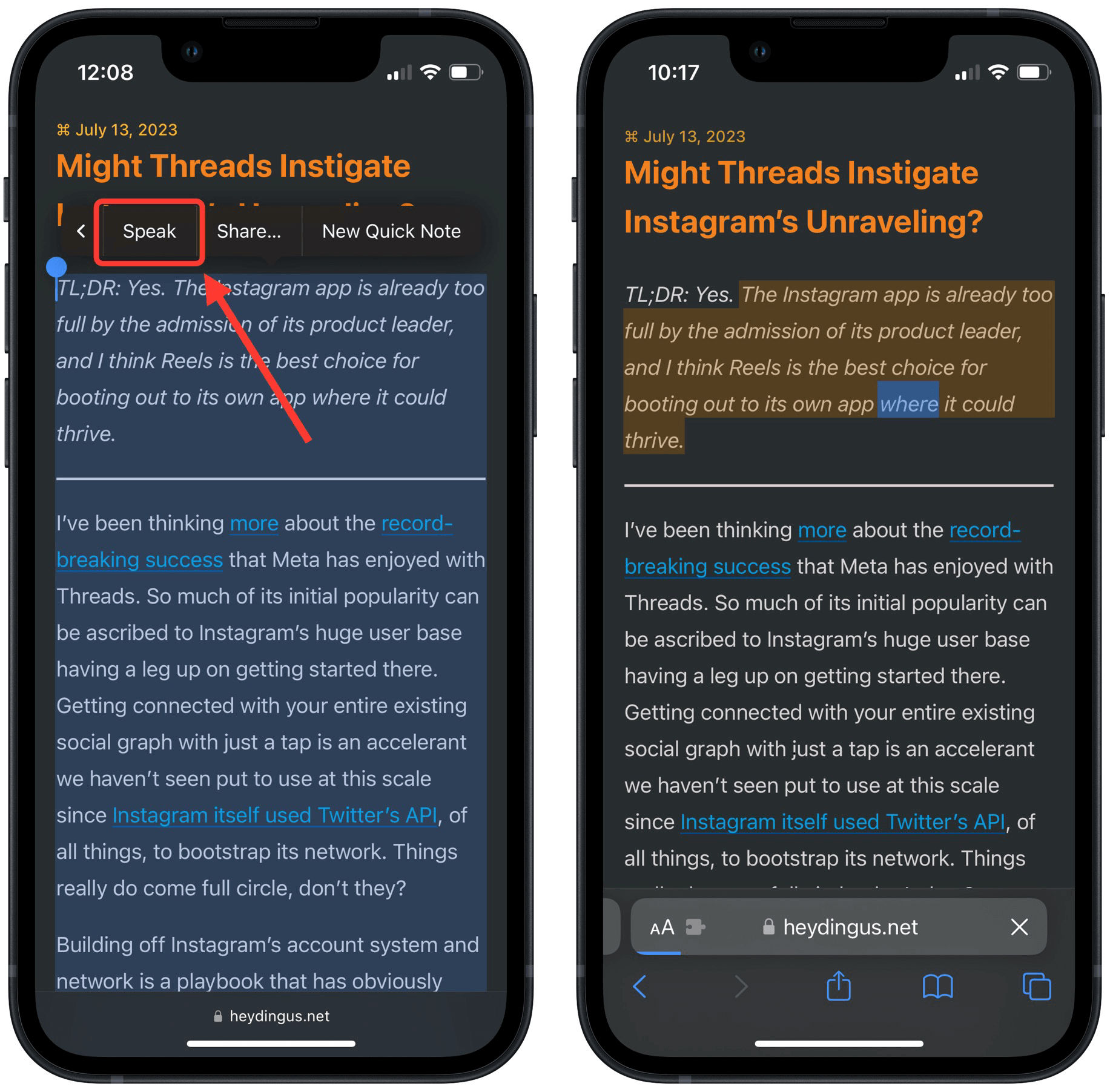

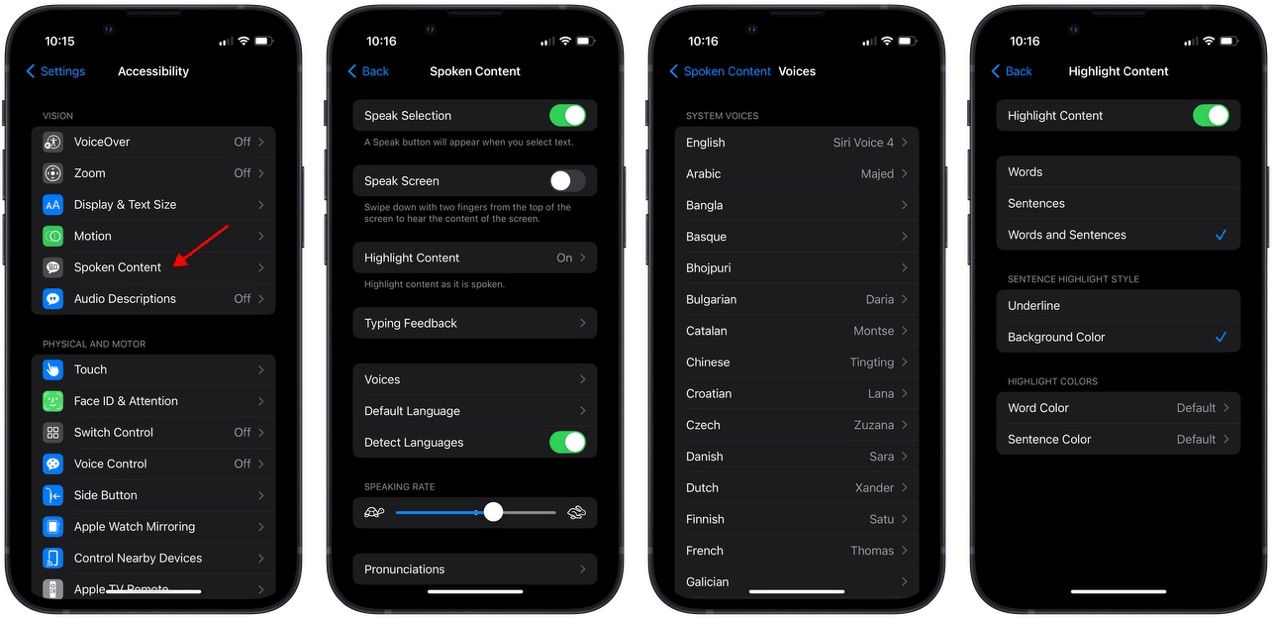

But I have good news! Already in iOS 16 (et al.), you can turn on ‘Speak Selection’ under ‘Spoken Content’ in the accessibility settings. It adds a ‘Speak’ button to the popup context menu for any selected text. Plus, you can turn on ‘Highlight Content’ to focus your attention on the current sentence and (optionally) the word being spoken. I have no idea how long these features have been hiding in the accessibility settings, but I’m so glad to have found them!

You can tweak other settings as well. For instance, I prefer Siri Voice 4, so I switched to it. It has great intonation and matches Siri’s spoken feedback on my devices. I sped up the speaking rate a little, too.

For a capability that hasn’t had much marketing behind it — at least from what I’ve seen — Spoken Content is quite full-featured! Don’t miss that you can add specific pronunciations for tricky words and names. It’s worth taking some time to explore all the submenus.

Future Feature Requests

I can’t try something without having some thoughts on how it could be improved.

- I’d love to see this added as an API for developers to implement in their apps. Then they wouldn’t have to spend their time building their own voices or text-to-speech engines. Likewise, as Apple’s voices improved, so would any app that uses them for spoken content.

- When I tried Omnivore’s reading voices, I was surprised at how much better the experience is when a secondary voice pops in to read block quotes. Knowing which text is being quoted really helps clarify the intended meaning of an article. It’s probably tricky to implement for arbitrary selected text (as opposed to audiobooks or articles, where the format is more constrained), but it would be great if Apple figured it out.
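
To make the block-quote idea a bit more concrete, here is a minimal sketch in Python of how text could be split into passages for two voices. It assumes the text is Markdown-style, with quoted lines starting with `>`, which is exactly the constraint that arbitrary selected text doesn’t give you. The function name and the `primary`/`secondary` labels are hypothetical. This is not how Omnivore or Apple actually does it, just one way the idea could work.

```python
from typing import List, Tuple

PRIMARY = "primary"      # hypothetical label for the main narration voice
SECONDARY = "secondary"  # hypothetical label for the block-quote voice


def assign_voices(text: str) -> List[Tuple[str, str]]:
    """Split Markdown-style text into (voice, passage) segments.

    Lines starting with '>' are treated as block quotes and given to
    the secondary voice; everything else goes to the primary voice.
    """
    segments: List[Tuple[str, str]] = []
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line:
            continue  # blank lines carry nothing to speak
        if line.startswith(">"):
            voice, spoken = SECONDARY, line.lstrip(">").strip()
        else:
            voice, spoken = PRIMARY, line
        if not spoken:
            continue  # a bare '>' line has no text
        if segments and segments[-1][0] == voice:
            # Merge consecutive lines read by the same voice.
            segments[-1] = (voice, segments[-1][1] + " " + spoken)
        else:
            segments.append((voice, spoken))
    return segments
```

Each resulting segment could then be handed to whatever speech engine is available, switching voices whenever the label changes. Plain selected text has no `>` markers, so the quote detection would need some other signal there.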